This study introduces HQ-Edit, a high-quality instruction-based image editing dataset with around 200,000 edits. Unlike prior approaches that rely on attribute guidance or human feedback to build datasets, we devise a scalable data collection pipeline that leverages advanced foundation models, namely GPT-4V and DALL-E 3. To ensure high quality, diverse examples are first collected online and expanded, then used to create high-quality diptychs featuring input and output images with detailed text prompts; post-processing then ensures precise alignment. In addition, we propose two evaluation metrics, Alignment and Coherence, to quantitatively assess the quality of image edit pairs using GPT-4V. HQ-Edit's high-resolution images, rich in detail and accompanied by comprehensive editing prompts, substantially enhance the capabilities of existing image editing models. For example, InstructPix2Pix fine-tuned on HQ-Edit attains state-of-the-art image editing performance, even surpassing models fine-tuned on human-annotated data. Dataset and models are available at [HQ-Edit].

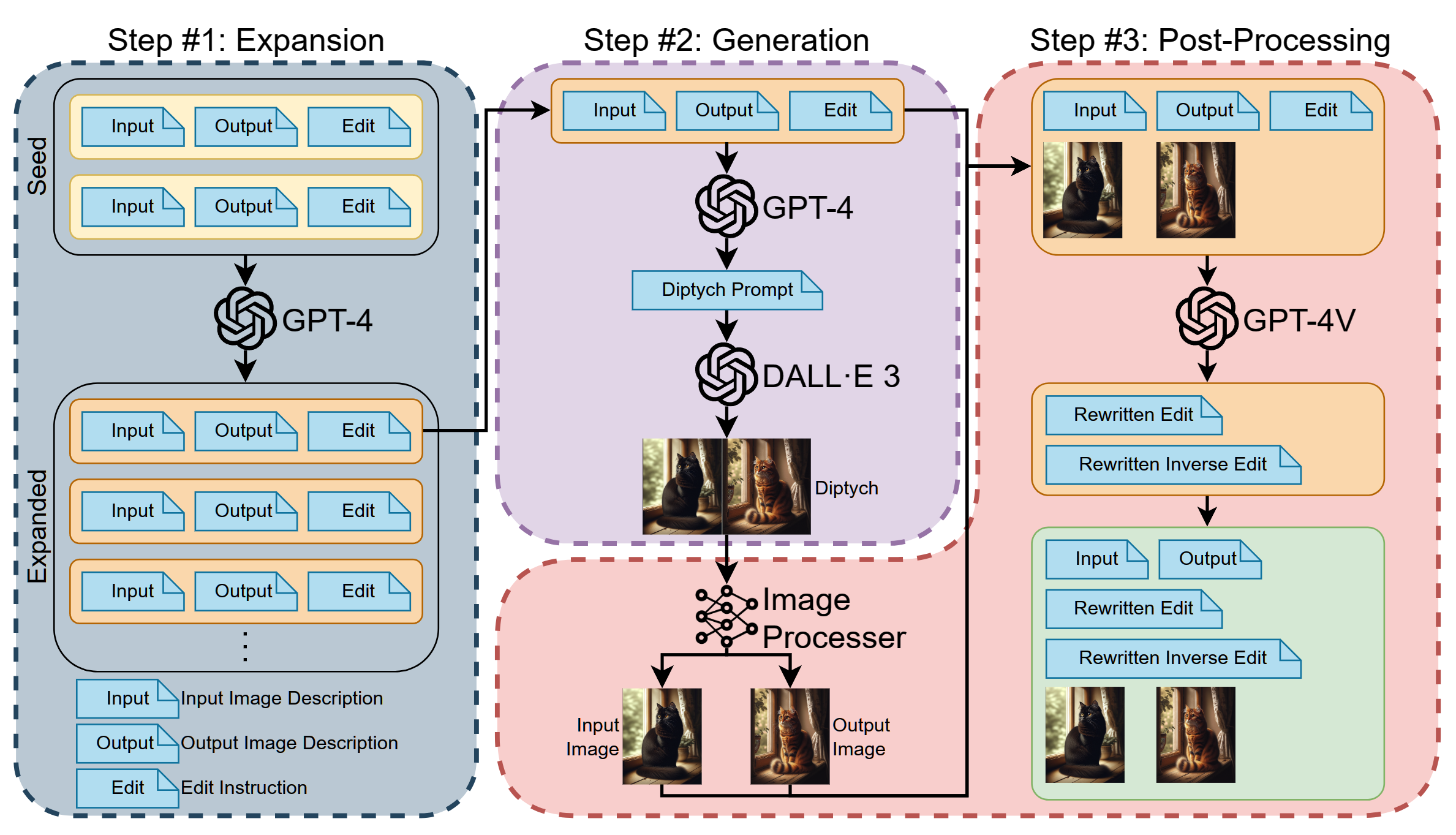

Method Overview. Our method consists of three steps: (1) Expansion: massively generating image descriptions and edit instructions from seed samples using GPT-4. (2) Generation: generating diptychs using GPT-4V and DALL-E 3 according to the image descriptions and instructions. (3) Post-Processing: post-processing the diptychs and edit instructions with GPT-4V and various other methods to produce image pairs and further enhance the quality of the dataset in different aspects.
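The step from diptych to image pair can be illustrated with a minimal sketch. It assumes the diptych is a side-by-side image with the input on the left and the output on the right, represented here as rows of RGB tuples. The function name `split_diptych` and the naive halving are illustrative assumptions, not the authors' implementation, and the sketch omits any alignment or quality-filtering step.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]  # rows of RGB pixels (hypothetical in-memory format)


def split_diptych(diptych: Image) -> Tuple[Image, Image]:
    """Split a side-by-side diptych into (input_image, output_image).

    Assumption: the left half is the input and the right half is the output.
    """
    if not diptych or not diptych[0]:
        raise ValueError("diptych must be a non-empty image")
    width = len(diptych[0])
    if any(len(row) != width for row in diptych):
        raise ValueError("all rows must have the same width")
    if width % 2 != 0:
        raise ValueError("diptych width must be even to split into equal halves")
    half = width // 2
    # Slice every row at the midpoint to obtain the two panels.
    left = [row[:half] for row in diptych]
    right = [row[half:] for row in diptych]
    return left, right
```

A real pipeline would load the generated image with an imaging library and would likely need to correct for panels that are not perfectly centered, which is why the post-processing step exists.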

HQ-Edit Data Sample. Example data sampled from HQ-Edit. Our data contains two main parts: Instruction (input, edit, inverse-edit, output) and Image (input image, output image). The two samples highlight that (1) the images are densely packed with details, (2) the input and output descriptions comprehensively describe the input and output images, and (3) the edit and inverse-edit instructions precisely delineate the transformations occurring between the two images.
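The record structure described above can be sketched as a small data class. The field names below are hypothetical and chosen only to mirror the caption; they are not the released dataset's actual schema. The `invert` helper shows one way the inverse-edit instruction could be used, under the assumption that swapping the images and instructions yields a valid reversed edit pair.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EditSample:
    # Instruction part (hypothetical field names)
    input_description: str
    edit_instruction: str
    inverse_edit_instruction: str
    output_description: str
    # Image part, stored here as file paths for illustration
    input_image_path: str
    output_image_path: str


def invert(sample: EditSample) -> EditSample:
    """Return the reversed pair: output becomes input, inverse-edit becomes edit."""
    return EditSample(
        input_description=sample.output_description,
        edit_instruction=sample.inverse_edit_instruction,
        inverse_edit_instruction=sample.edit_instruction,
        output_description=sample.input_description,
        input_image_path=sample.output_image_path,
        output_image_path=sample.input_image_path,
    )
```

Applying `invert` twice returns the original sample, which is a quick sanity check that the swap is symmetric.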

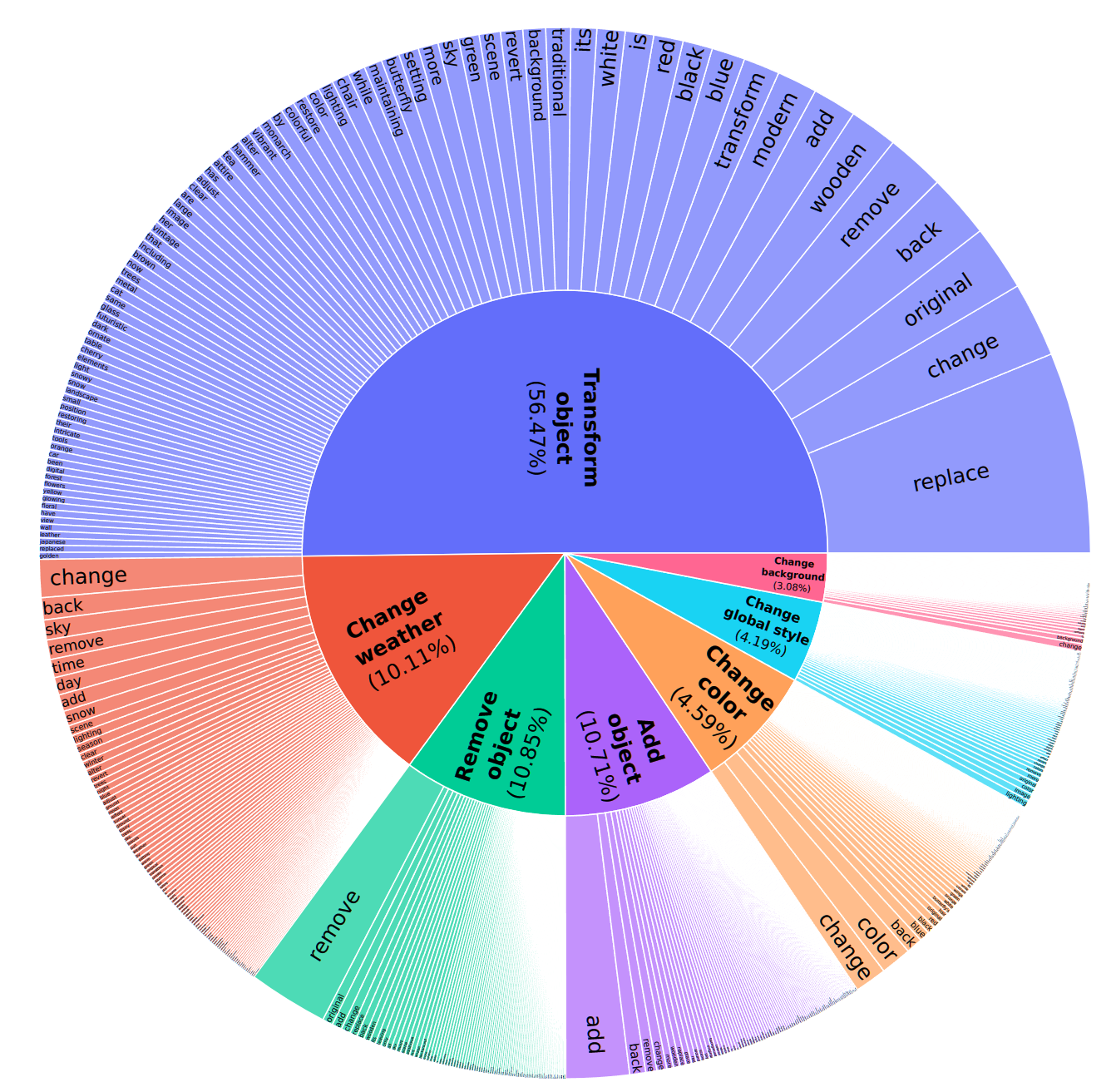

Distribution of edit types and keywords in instructions. The inner ring depicts the types of edit instructions, and the outer ring shows the frequencies of instruction keywords. The distribution demonstrates the rich diversity of our instructions.

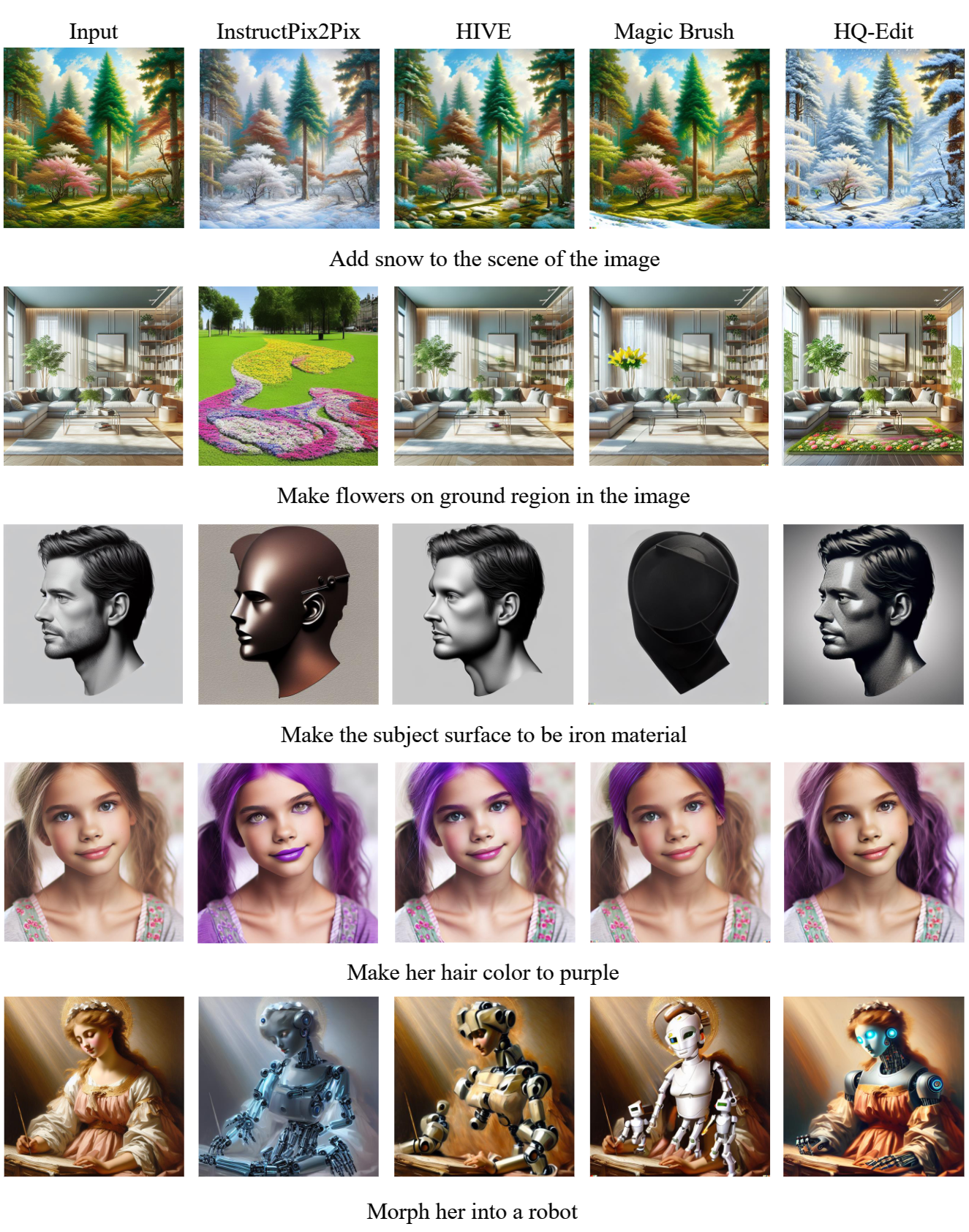

Qualitative comparison of InstructPix2Pix, MagicBrush, HIVE, and HQ-Edit. HQ-Edit covers a more diverse range of editing instructions and manipulates images with greater precision and detail.

@article{hui2024hq,
  title   = {HQ-Edit: A High-Quality Dataset for Instruction-based Image Editing},
  author  = {Hui, Mude and Yang, Siwei and Zhao, Bingchen and Shi, Yichun and Wang, Heng and Wang, Peng and Zhou, Yuyin and Xie, Cihang},
  journal = {arXiv preprint arXiv:2404.09990},
  year    = {2024}
}